IBM develops ESS 3200-based Nvidia DGX SuperPOD – Blocks and Files


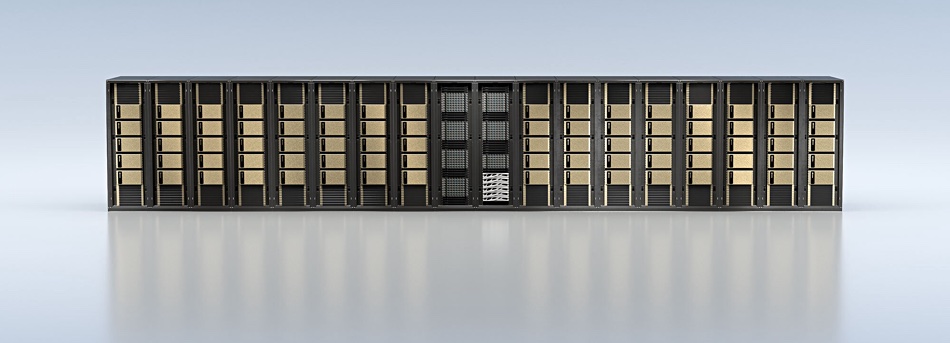

IBM is developing an Nvidia DGX SuperPOD system based on its ESS 3200 and Spectrum Scale storage. It has updated its reference architecture for DGX PODs and published benchmark data for the ESS 3200 with a DGX POD.

A DGX POD is a reference architecture for AI processing. It contains up to nine Nvidia DGX-1 servers, twelve storage servers (from Nvidia partners) and three network switches, and supports training and inference of single-node and multi-node AI models using Nvidia AI software. A SuperPOD is an updated and larger POD architecture, which starts at 20 Nvidia DGX A100 systems and scales up to 140 of them.

Douglas O’Flaherty, Global Ecosystem Leader for IBM Storage, wrote in a blog that IBM Storage is showcasing the latest Magnum IO GPUDirect Storage (GDS) performance with new benchmark results, and announced an updated reference architecture (RA) developed for 2, 4 and 8-node Nvidia DGX POD configurations. Nvidia and IBM have also committed to deliver a DGX SuperPOD solution with the IBM Elastic Storage System 3200 (ESS 3200) by the end of the third quarter of this year.

He writes that, “with the future integration of the upgradeable ESS 3200 into Nvidia Base Command Manager, including support for Nvidia BlueField DPUs, networking and multi-tenancy will be simplified.”

The ESS 3200 is an all-NVMe, 2U, 24-bay SSD system supporting up to eight InfiniBand HDR 200Gbit/s or 100Gbit Ethernet ports.

O’Flaherty told us IBM has “released updated IBM storage reference architectures with NVIDIA DGX A100 systems to reflect the increased capacity of the ESS 3200 (2x that of the 3000).” The reference architecture uses Nvidia’s GPUDirect scheme, which bypasses the CPU and DRAM, to set up RDMA links between ESS 3200 storage and the DGX A100 GPUs, so that Spectrum Scale can read and write data to the GPUs in the DGX POD at high speed.

According to IBM’s 2020 reference architecture (RA) document, and using fio bandwidth tests enabled by GDS, “the total system read throughput scales to deliver 94 GB/s with two ESS 3000 units and eight DGX A100 systems,” and “the total system write throughput reaches 62 GB/s with two ESS units and eight DGX A100 systems.”

The ESS 3200 is much faster than the ESS 3000, and that edition of the RA did not use GPUDirect Storage; it used only the storage fabric, as per Nvidia guidelines.

Views of the DGX POD network

There are two views of the network in a DGX POD: the storage fabric view and the compute fabric view.

The storage fabric consists of two HDR/200GbE NICs connected via the CPU, while the compute fabric has eight HDR NICs closely coupled to the GPUs. In the IBM ESS 3200 reference architecture, the storage fabric has a theoretical maximum of 50 GB/s (2x HDR) for a single DGX and actually delivered 43 GB/s, 86% of the theoretical maximum.
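The 50 GB/s ceiling follows from the port count and the HDR line rate. The sketch below is a rough back-of-the-envelope check, assuming a nominal 200Gbit/s per HDR port, eight bits per byte and no protocol overhead; it reads the article's figures as gigabytes per second, which is what "2x HDR = 50" implies. The helper names are illustrative only.

```python
# Assumed nominal HDR InfiniBand line rate per port, in gigabits per second.
HDR_GBITS_PER_PORT = 200


def theoretical_max_gbytes(ports: int, gbits_per_port: float = HDR_GBITS_PER_PORT) -> float:
    """Nominal aggregate bandwidth in GB/s (8 bits per byte, overhead ignored)."""
    return ports * gbits_per_port / 8


def efficiency(measured_gbytes: float, ports: int) -> float:
    """Measured throughput as a fraction of the nominal maximum."""
    return measured_gbytes / theoretical_max_gbytes(ports)


storage_max = theoretical_max_gbytes(2)  # two HDR NICs on the storage fabric
storage_eff = efficiency(43, 2)          # 43 GB/s measured for a single DGX A100
print(f"storage fabric: {storage_max:.0f} GB/s max, {storage_eff:.0%} achieved")
```

Real links lose a little to encoding and protocol headers, so the usable ceiling sits slightly below this nominal figure.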

On a single DGX, the compute fabric has a theoretical maximum of 200 GB/s (8x HDR). With GPUDirect Storage, a pair of ESS 3200s delivered 191 GB/s, or 95.5% of the theoretical maximum, much faster than the 94 GB/s achieved with the ESS 3000.
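The same arithmetic applies to the compute fabric. This sketch assumes a nominal 25 GB/s per HDR port (200Gbit/s divided by eight) and treats the quoted figures as GB/s. The ratio against the ESS 3000 number only quantifies "much faster" using the published figures; the two tests used different configurations (the ESS 3000 result came from eight DGX A100s without GDS), so it is not a like-for-like benchmark.

```python
# Assumed nominal bandwidth per HDR port: 200 Gbit/s / 8 bits per byte.
HDR_GBYTES_PER_PORT = 200 / 8

compute_max = 8 * HDR_GBYTES_PER_PORT  # eight HDR NICs on the compute fabric
gds_measured = 191                     # GB/s, pair of ESS 3200s with GDS
ess3000_read = 94                      # GB/s, 2020 RA: two ESS 3000s, eight DGX A100s

compute_eff = gds_measured / compute_max  # fraction of nominal maximum achieved
ratio = gds_measured / ess3000_read       # relative to the ESS 3000 read figure

print(f"compute fabric: {compute_max:.0f} GB/s max, {compute_eff:.1%} achieved")
print(f"GDS result is {ratio:.2f}x the ESS 3000 read figure")
```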

Bootnote. Nvidia GPUDirect release notes for May 2021 indicate that support has been added for Excelero’s NVMesh devices and ScaleFlux computational storage. Support has also been added, through the Mellanox OpenFabrics Enterprise Distribution for Linux (MLNX_OFED), for the distributed file systems of WekaIO (WekaFS v3.8.0), DDN (EXAScaler v5.2) and VAST Data (VAST v3.4). Other supported block and file system access products are ScaleFlux CSD, NVMesh and Pavilion Data.
